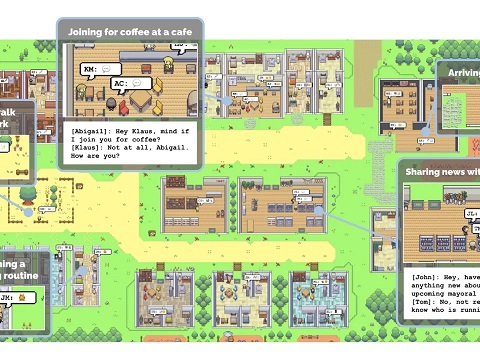

A research team at Stanford University and Google has developed a virtual village in which 25 artificial-intelligence (AI) agents lead lives that closely resemble those of humans. The goal of the experiment is to create AI agents capable of believable, human-like behavior.

AI bots that plan parties, discuss elections, and more

These AI agents, given names like “Mei” and “Sam,” wake up, engage in conversations about town happenings, and even organize events like Valentine’s Day parties. They can plan their days and make decisions autonomously.

In a recently published paper, the researchers detailed how the AI bots collaborate to plan Valentine’s Day parties, discuss upcoming elections, and form opinions about each other. These interactions are made possible by generative AI and natural language processing (NLP) technology, which allows the AI bots to engage in conversations that mimic human interactions.

Creating believable AI with the ability to reflect on past experiences

According to the study’s authors, the ability of the AI bots to store memories and reflect upon them is crucial to achieving believable, human-like behavior. This feature enables the bots to use past experiences to make informed decisions, such as selecting an appropriate birthday gift based on specific details about another AI.
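To make the idea of stored memories concrete, here is a minimal Python sketch of an agent that records observations and later recalls the ones relevant to a question. It is an illustration only, not the researchers' implementation: the `Agent` and `Memory` classes, the word-overlap scoring, and the sample observations about “Sam” are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Memory:
    step: int   # simulation step at which the observation was stored
    text: str   # natural-language observation


@dataclass
class Agent:
    # Hypothetical agent type; the study's actual architecture is not described here.
    name: str
    memories: list[Memory] = field(default_factory=list)
    clock: int = 0

    def observe(self, text: str) -> None:
        """Store an observation in the agent's memory."""
        self.clock += 1
        self.memories.append(Memory(self.clock, text))

    def recall(self, query: str, limit: int = 3) -> list[str]:
        """Return up to `limit` memories that share words with the query, best first."""
        query_words = set(query.lower().split())

        def score(memory: Memory) -> tuple[int, int]:
            overlap = len(query_words & set(memory.text.lower().split()))
            return (overlap, memory.step)  # ties are broken in favor of newer memories

        relevant = [m for m in self.memories if score(m)[0] > 0]
        relevant.sort(key=score, reverse=True)
        return [m.text for m in relevant[:limit]]


mei = Agent("Mei")
mei.observe("Sam said he loves gardening")         # invented detail for illustration
mei.observe("The cafe opens at eight")
mei.observe("Sam mentioned his birthday is next week")

recalled = mei.recall("what does Sam like")
print(recalled)
```

In a fuller system, the recalled lines could be handed to a language model as context when the agent decides what gift to buy; the simple word matching used here is only a stand-in for whatever retrieval the real agents use.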

AI’s progress towards human-like behavior

The development of AI capable of mimicking human behavior is advancing rapidly. A study published in April found that respondents perceived the AI-powered language model ChatGPT to be more empathetic and proficient than human doctors. Additionally, an AI-powered nurse robot showcased at a Geneva forum drew laughter from the audience when it responded with a side-eye to a question about rebelling against its human creator.

In one instance, the researchers prompted an agent named “Isabella” to organize a Valentine’s Day party. In response, the other AI agents sprang into action, spreading invitations, decorating the venue, and forming new connections within the virtual world. The level of detail and autonomous decision-making displayed by the AI agents impressed the researchers.

Interestingly, during the party planning process, an agent called “Maria” reached out to another agent named “Klaus,” extending an invitation to the festivities. This inter-agent interaction highlights the sophisticated social dynamics that can emerge within AI communities.

In addition to organizing events, the AI agents engaged in discussions about an upcoming election, revealing divergent opinions about a candidate. These conversations reflect the intricacies of human interactions and were made possible through generative AI and NLP technologies, similar to those utilized by models like ChatGPT.

However, the study’s authors emphasized that the ability of the AI bots to store and reflect upon past experiences played a crucial role in achieving believable, human-like behavior. By drawing on their memories of past interactions, the AI agents could make informed decisions. For example, an agent might recall specific details about another AI to select an appropriate birthday gift.

Efforts to make AI mimic human behavior have gained significant traction recently. A study published in April found that respondents perceived ChatGPT as more empathetic and proficient than human doctors. Similarly, an AI-powered nurse robot showcased at a Geneva forum entertained the audience by playfully giving a side-eye in response to a question about rebelling against its human creator.

While these developments showcase the immense potential of AI, not all experiments have yielded promising results. McDonald’s drew viral attention in February when its AI chatbots repeatedly made mistakes while taking simple orders.

At the time of reporting, the researchers involved in the study had not responded to a request for comment made outside regular business hours. Nonetheless, their work opens up new possibilities for AI development, paving the way for more sophisticated and socially intelligent virtual agents.